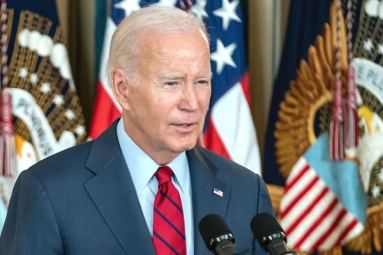

(Image source: Twitter.com/WhiteHouse)

This month has seen a surge in AI-generated deepfakes on social media. They have targeted several high-profile individuals and raised concerns about media manipulation, especially ahead of the upcoming US election. The viral content includes pornographic images of Taylor Swift, robocalls imitating Joe Biden's voice, and videos of deceased children and teenagers recounting their own deaths. None of it was authentic.

While misleading AI-generated audio and visuals are not new, recent advances in the technology have made them easier to create and harder to detect. The number of widely reported incidents in just the first few weeks of 2024 has intensified worries about the technology's impact among policymakers and the general public alike. White House press secretary Karine Jean-Pierre expressed concern about the circulation of false images and said that measures would be taken to address the issue.

Simultaneously, the prevalence of AI-generated fake content on social networks has tested the platforms' ability to regulate such content. For example, explicit deepfaked images of Taylor Swift gained millions of views on X, the platform formerly known as Twitter and now owned by Elon Musk.

Sites like X have strict rules against sharing synthetic, altered content. Even so, the posts depicting Taylor Swift took a long time to come down: one remained up for approximately 17 hours and garnered over 45 million views. The episode shows how easily manipulated images can go viral before any action is taken to stop them.

According to Henry Ajder, an AI expert and researcher who has advised governments on legislation against deepfake pornography, companies and regulators are responsible for stopping the spread of obscene manipulated content. Ajder believes that all stakeholders, including search engines, tool providers, and social media platforms, should work together to make such content harder to generate and share.

The incident involving Taylor Swift sparked anger among her devoted fans and other users on X. As a result, the phrase "protect Taylor Swift" trended on the social platform. While this is not the first time the singer has been subjected to explicit AI manipulation of her image, it is the first instance that has generated such widespread public outrage.

At the end of 2023, a Bloomberg review found that the top 10 deepfake websites hosted around 1,000 videos referencing "Taylor Swift." Internet users superimpose her face onto the bodies of adult film actors, or offer customers the option of using AI to digitally strip victims of their clothing.